Familiar

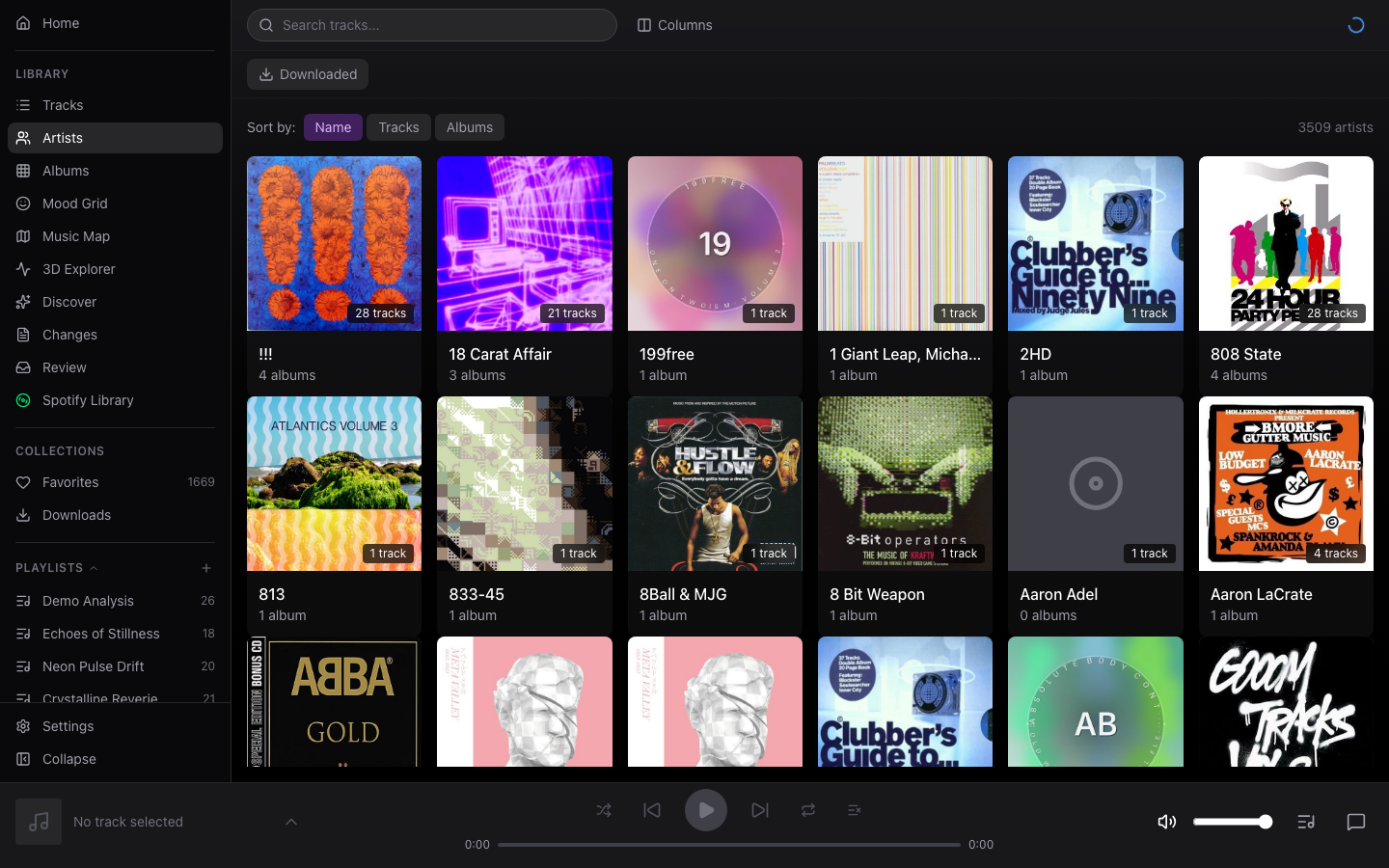

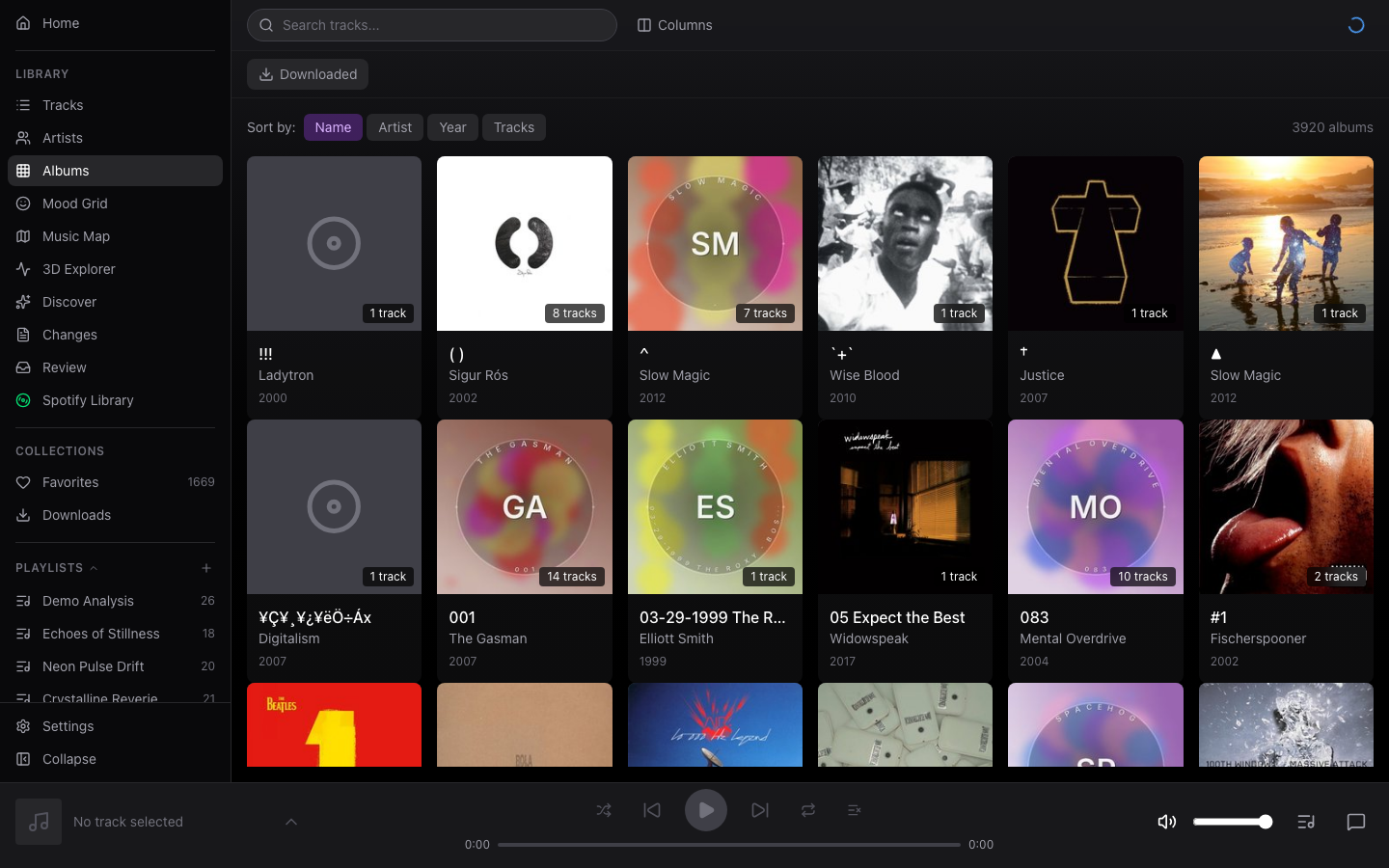

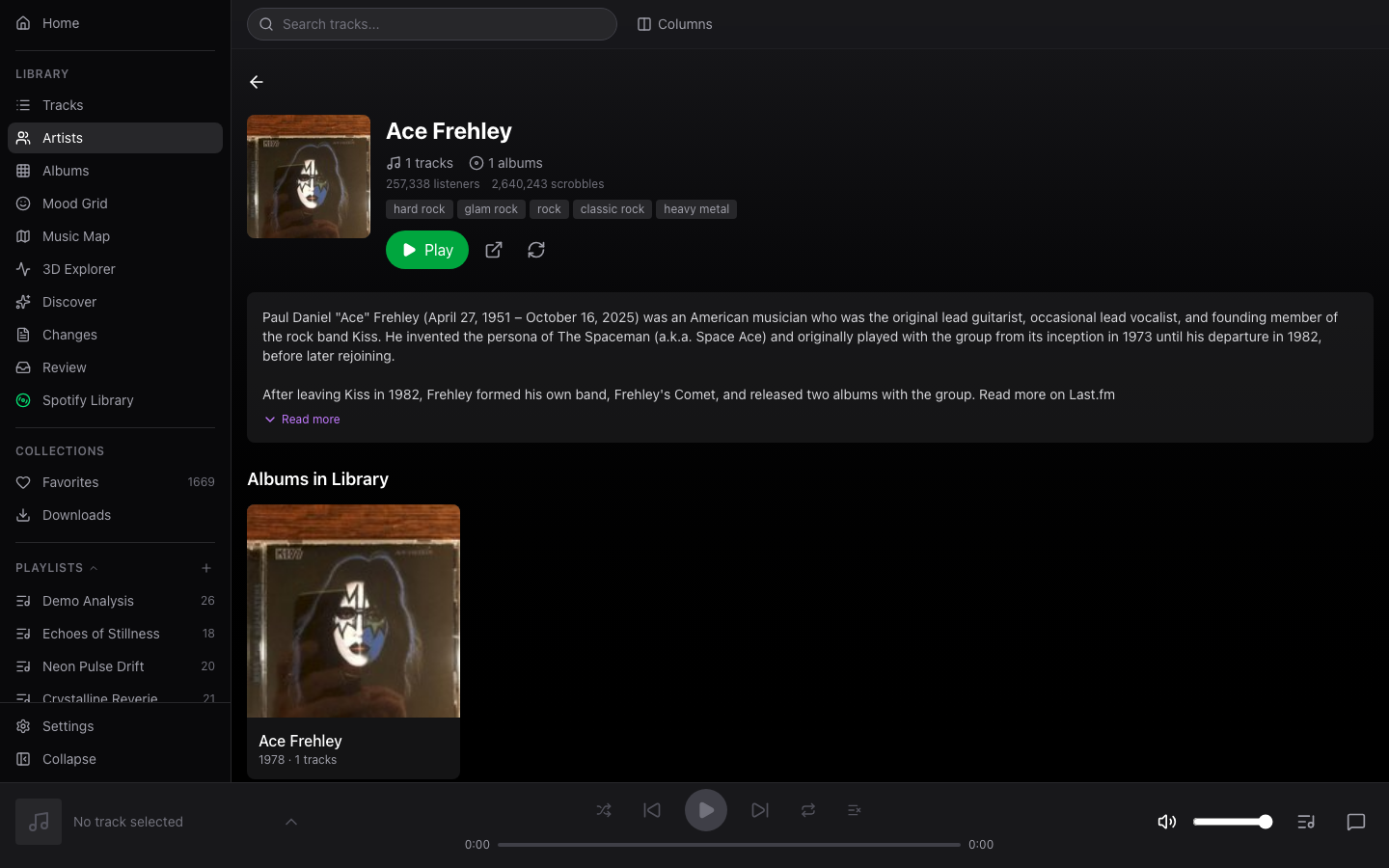

A self-hosted music player that understands the sound of your music. Describe what you want to hear — get a playlist from your own collection.

v0.1.0-alpha2 · 2026-04-17

Describe what you want to hear. Familiar understands the sound of your music, not just its metadata. Ask for "something that sounds like rain on a window" and it actually works.

Your music, your server, your data. Runs entirely on your hardware — no cloud dependency, no subscriptions, no data leaving your network.

Community-powered analysis. Share anonymized audio fingerprints with other users. New installations benefit instantly from pre-computed analysis, skipping hours of processing.

How Familiar compares

| Feature | Familiar | Navidrome | Jellyfin | Plex |
|---|---|---|---|---|
| AI chat + playlist creation | Yes | — | — | — |
| Semantic audio search | Yes (CLAP) | — | — | — |
| Audio feature analysis | BPM, key, energy, mood | — | Basic | Basic |
| Community analysis cache | Yes | — | — | — |
| Self-hosted / no cloud | Yes | Yes | Yes | Partial |
| Music video playback | Yes | — | Yes | Yes |
| Smart playlists | Rules-based | — | — | Yes |
| Mobile PWA | Yes | Web only | Web + apps | Apps |

Features

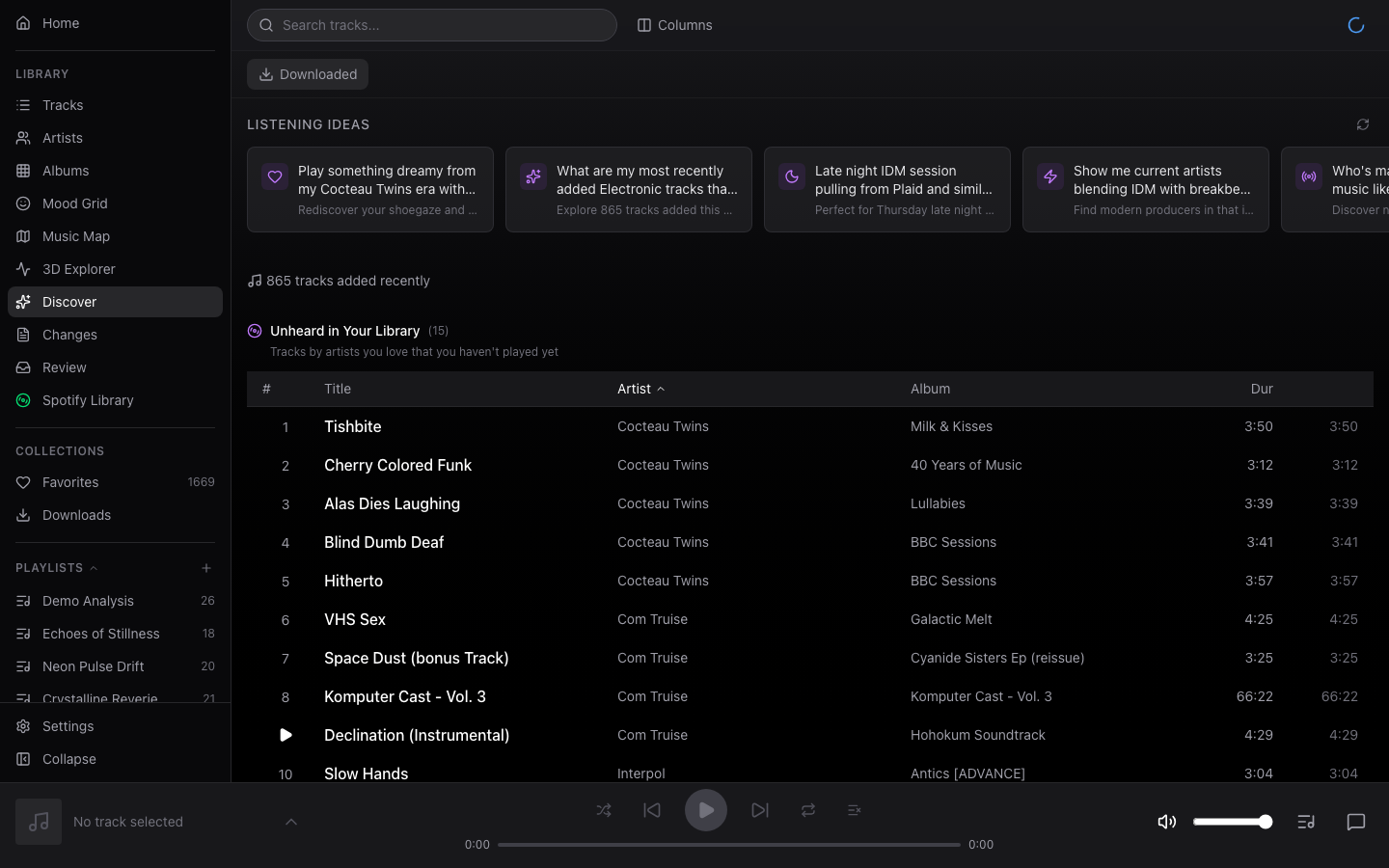

Discovery & Search

Semantic audio search

Describe the sound: "upbeat with synths", "acoustic and melancholy". Matches on CLAP audio embeddings, not tags.

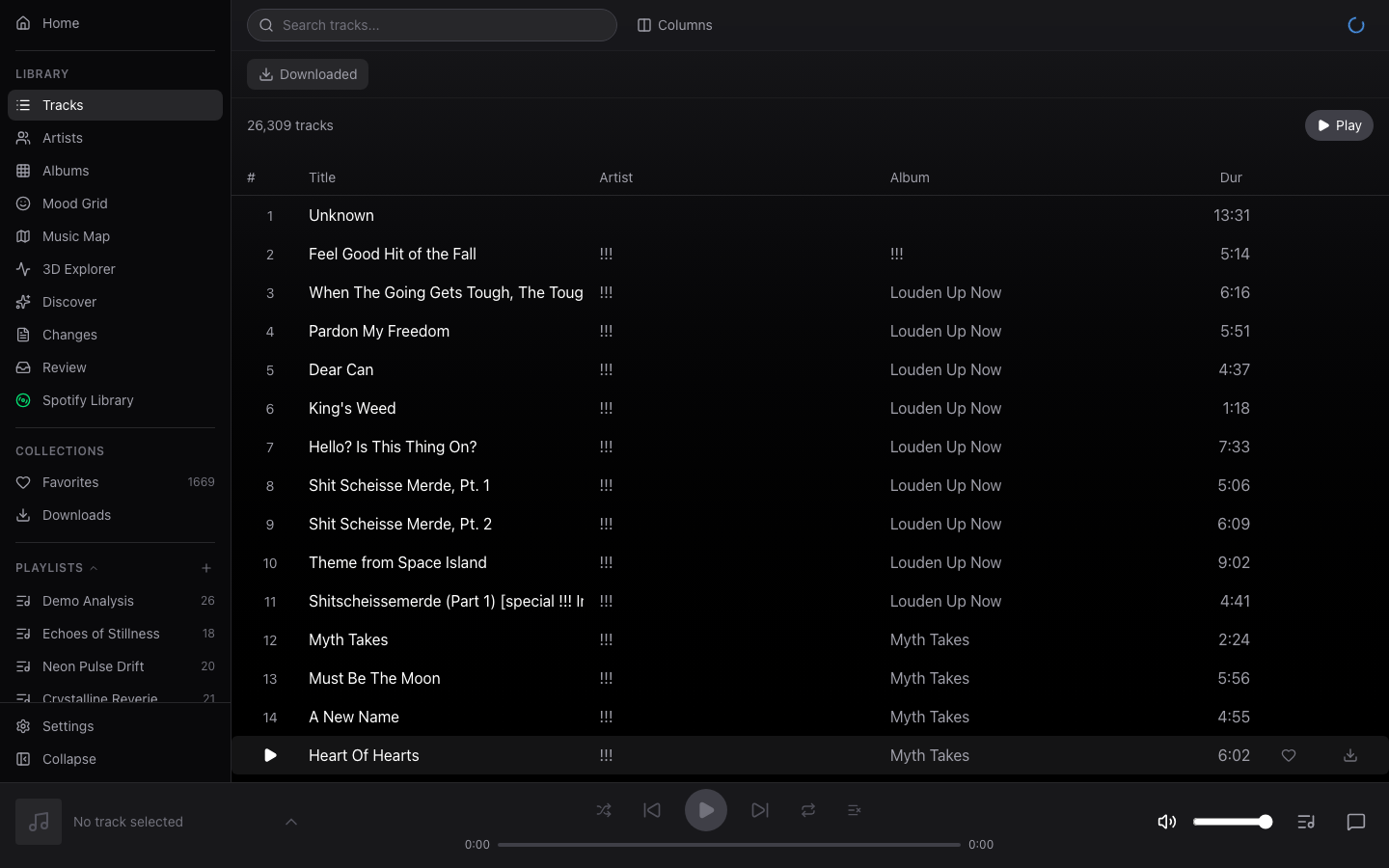

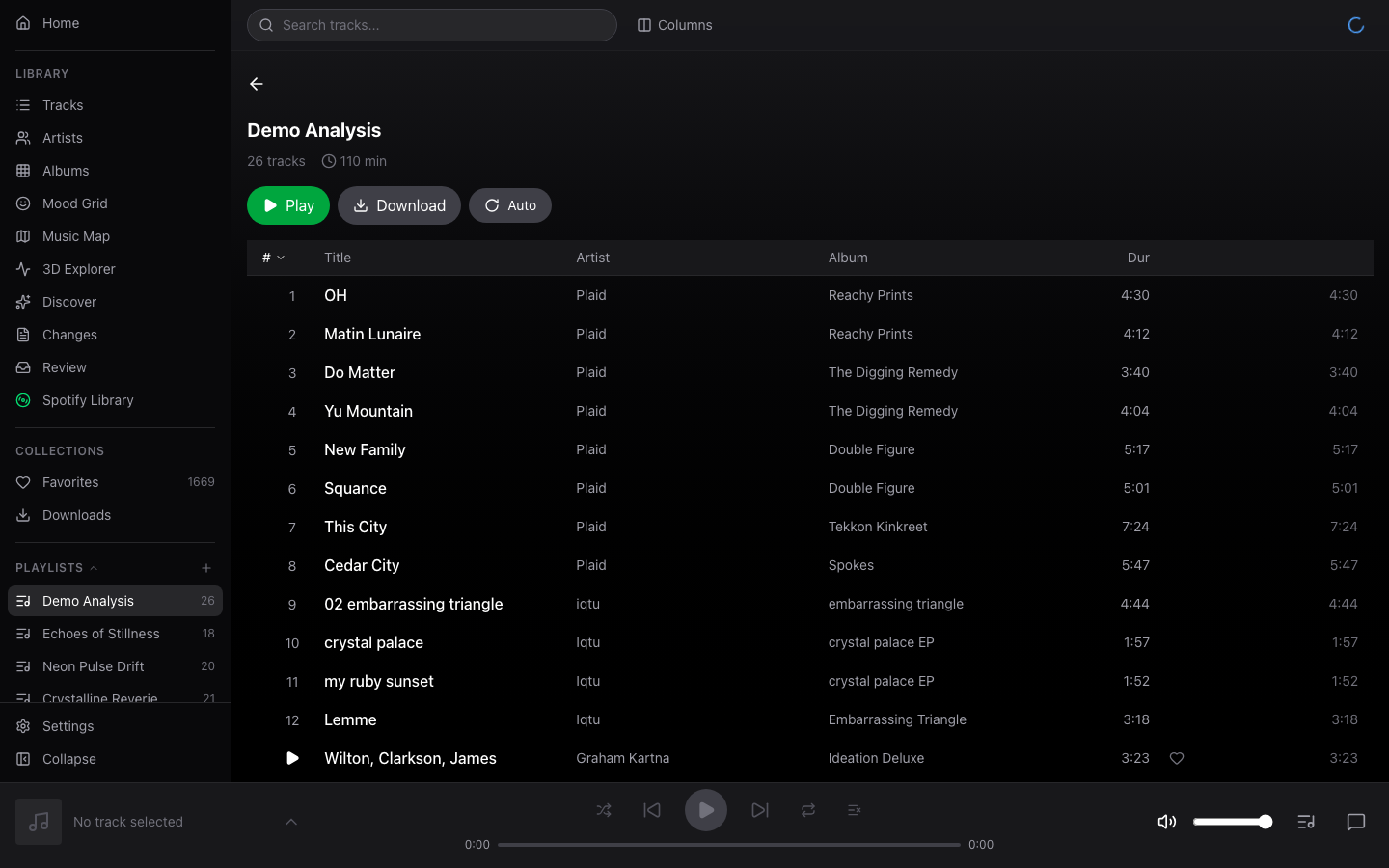

AI chat assistant

Claude with 27 tools for search, playback, metadata correction, and playlist creation.

Find similar

Click any track to surface sonically similar music from your library via vector similarity.

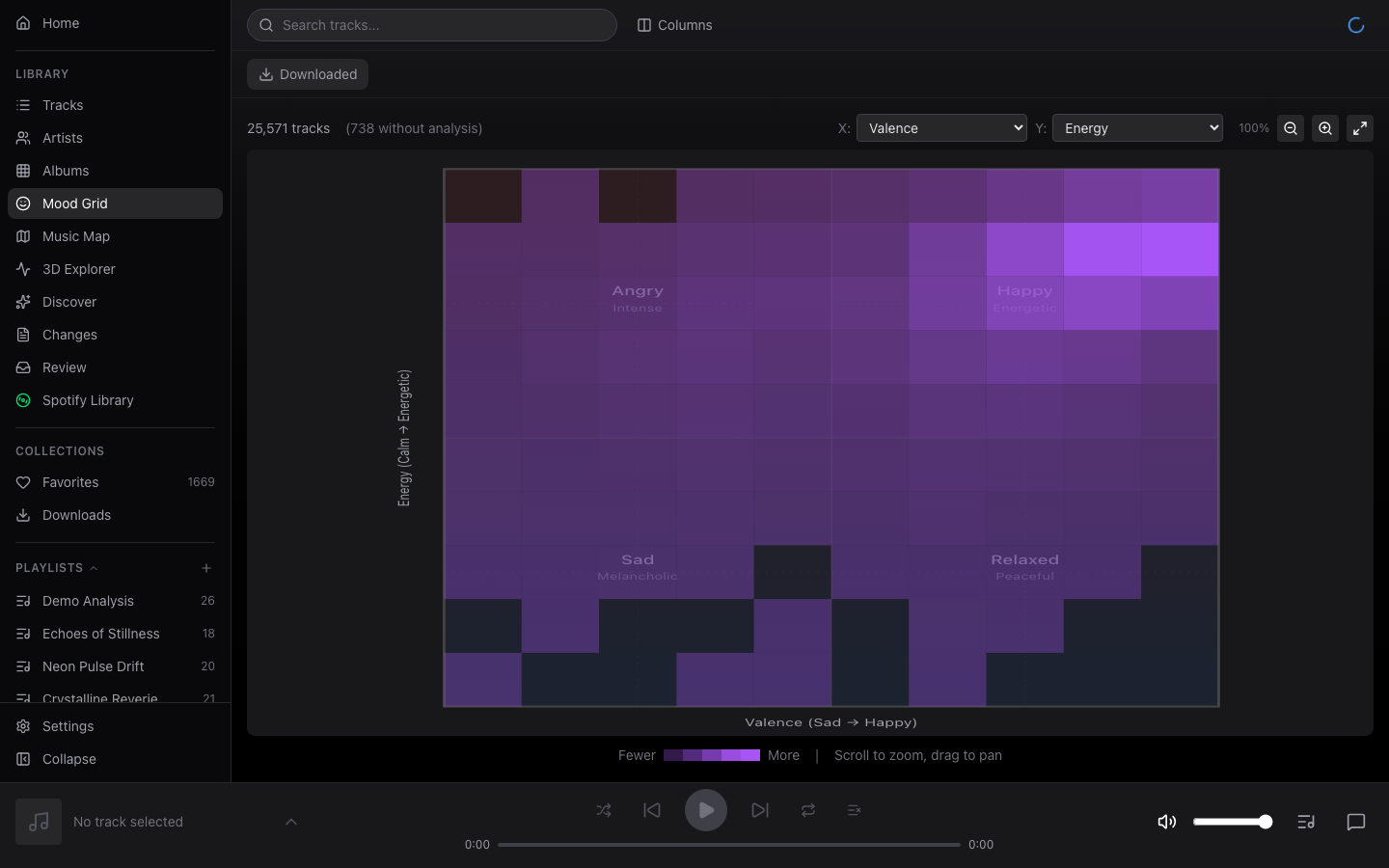

Mood Grid

2D scatter of your library by energy and valence — happy/sad × calm/energetic.
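To make the two axes concrete, here is a minimal sketch of how a track could be assigned to one of the four quadrants. It assumes energy and valence are both scaled 0 to 1 and that 0.5 is the dividing line; the `mood_quadrant` helper and the sample tracks are hypothetical, not Familiar's actual code.

```python
def mood_quadrant(energy, valence, midpoint=0.5):
    """Label a track by its quadrant in the energy/valence plane.

    Assumes both features are scaled 0..1; the 0.5 midpoint is an
    illustrative choice, not a value taken from Familiar.
    """
    mood = "happy" if valence >= midpoint else "sad"
    arousal = "energetic" if energy >= midpoint else "calm"
    return f"{mood}/{arousal}"


# Hypothetical tracks: (name, energy, valence)
tracks = [("track_a", 0.8, 0.9), ("track_b", 0.2, 0.1), ("track_c", 0.2, 0.9)]
for name, energy, valence in tracks:
    print(name, mood_quadrant(energy, valence))
```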

Music Map

Ego-centric similarity map. Click any artist to re-center the view.

3D Explorer

Navigate a 3D space of artists with hover-to-preview audio.

Audio Analysis

CLAP embeddings

512-dim audio embeddings from LAION's CLAP model. Powers semantic search and similarity.
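As a rough illustration of how similarity over embeddings can rank tracks, the sketch below uses toy 4-dimensional vectors in place of real 512-dim CLAP embeddings. Cosine similarity is an assumption here (the metric Familiar uses is not stated above), and the track names, vectors, and `most_similar` helper are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def most_similar(query, library, k=2):
    """Return the k track names whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query, emb), name) for name, emb in library.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]


# Toy 4-dim vectors standing in for 512-dim CLAP embeddings (made-up data).
library = {
    "ambient_pad": [0.9, 0.1, 0.0, 0.2],
    "synth_pop": [0.1, 0.9, 0.3, 0.0],
    "acoustic_ballad": [0.8, 0.0, 0.1, 0.4],
}
query = [1.0, 0.0, 0.0, 0.3]
print(most_similar(query, library))
```

In a text-to-audio search the query vector would come from embedding the text prompt; for "find similar" it would be the embedding of the selected track.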

Musical features

BPM, key, energy, valence, danceability, acousticness, instrumentalness — extracted via librosa.

Community cache

Opt-in sharing of analysis fingerprints. New libraries skip hours of extraction.

Playback & Library

Offline PWA

Web app installs to home screen. IndexedDB track cache for offline listening.

iOS TestFlight build

Native Capacitor wrapper with background audio, lock-screen controls, and CarPlay scaffolding.

Smart playlists

Rules-based auto-updating playlists. Combine tags, features, and listening history.

Music videos

Attach video files to tracks and play them back in the full player.

Ask Familiar…

Real prompts that map to real filters. The AI chat translates phrases like these into audio-feature queries across your library.

"Something chill for late-night coding"

energy < 0.4 · valence 0.3–0.6

"Upbeat and danceable"

energy > 0.6 · valence > 0.6 · danceability > 0.5

"Melancholy acoustic"

valence < 0.3 · acousticness > 0.4

"Dreamy / ambient"

energy < 0.3 · acousticness > 0.4 · dynamic_range_min < 10

"Jazzy with a loose live feel"

swing_min > 0.3 · tempo_character = "breathing"

"Beatmatch-safe house set"

tempo_cv_max = 0.05

"French lyrics only"

lyrics_language = "fr"

"Aggressive and bright"

energy > 0.8 · brightness > 0.6
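The mappings above can be read as simple predicates over per-track features. The sketch below applies the "chill for late-night coding" filter to a few made-up tracks. The condition format, the `matches` helper, and the choice of inclusive bounds for the valence range are assumptions for illustration, not Familiar's query API.

```python
import operator

# Comparison operators used by the illustrative condition format below.
OPS = {
    "<": operator.lt,
    ">": operator.gt,
    "<=": operator.le,
    ">=": operator.ge,
    "=": operator.eq,
}

# "Something chill for late-night coding": energy < 0.4, valence 0.3 to 0.6
# (treating the valence range as inclusive is an assumption).
CHILL = [("energy", "<", 0.4), ("valence", ">=", 0.3), ("valence", "<=", 0.6)]


def matches(track, conditions):
    """True if the track has every named feature and satisfies every condition."""
    return all(
        feature in track and OPS[op](track[feature], value)
        for feature, op, value in conditions
    )


# Made-up tracks with made-up feature values.
tracks = [
    {"title": "Track A", "energy": 0.25, "valence": 0.45},
    {"title": "Track B", "energy": 0.85, "valence": 0.70},
    {"title": "Track C", "energy": 0.30, "valence": 0.10},
]
playlist = [t["title"] for t in tracks if matches(t, CHILL)]
print(playlist)
```

Only Track A passes: Track B is too energetic and Track C falls below the valence range.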

Install

DISABLE_CLAP_EMBEDDINGS=true.

Pull the prebuilt image and start the stack

mkdir familiar && cd familiar

curl -LO https://raw.githubusercontent.com/seethroughlab/familiar/master/docker/docker-compose.prod.yml

curl -LO https://raw.githubusercontent.com/seethroughlab/familiar/master/docker/init-pgvector.sql

MUSIC_LIBRARY_PATH=~/Music docker compose -f docker-compose.prod.yml up -d

Two files, one compose up. Uses the multi-arch image at ghcr.io/seethroughlab/familiar:latest.

Open the UI

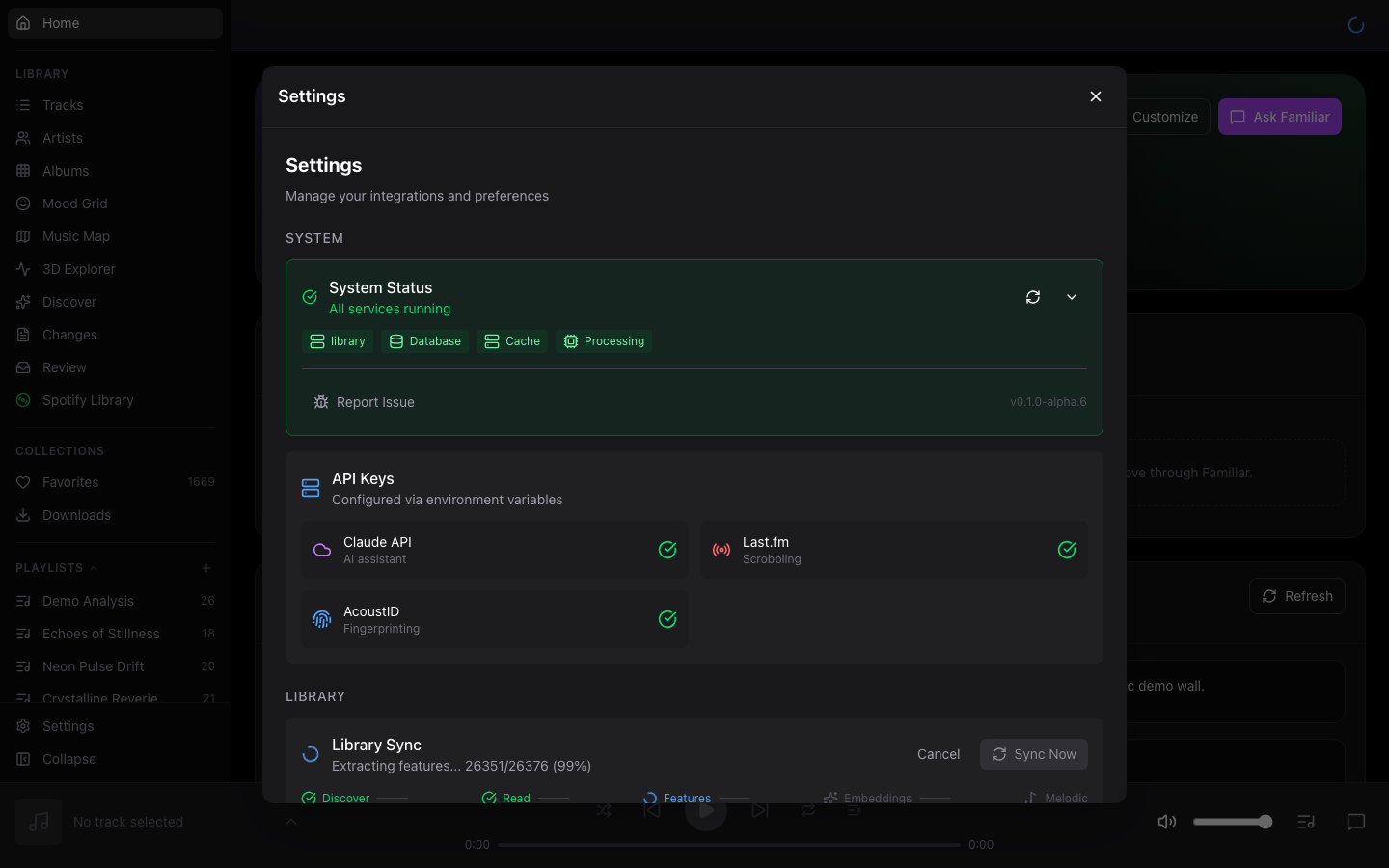

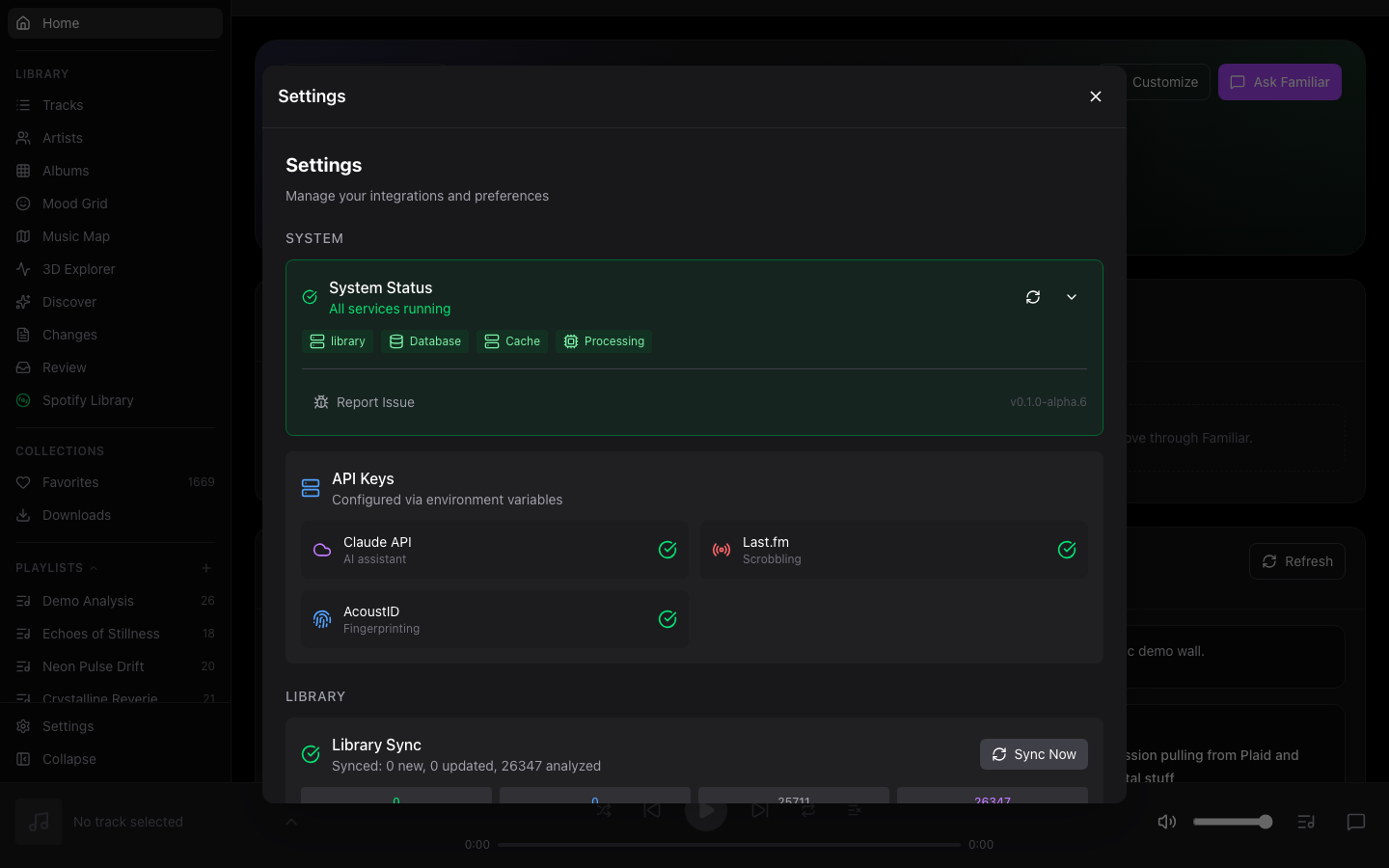

Visit http://localhost:4400. The admin page walks you through API keys (Anthropic for AI chat, optional Last.fm and AcoustID).

Scan your library

Point Familiar at a music folder in Settings → Library Management. It'll scan, fingerprint, and start extracting audio features in the background.

macOS? (journald swap + 8 GB RAM note)

The production compose uses journald logging (Linux-only). On macOS, add the override:

docker compose -f docker-compose.prod.yml -f docker-compose.macos.yml up -d

See the macOS guide for Apple Silicon notes and the ./start.sh helper script.

Build from source

git clone https://github.com/seethroughlab/familiar.git

cd familiar/docker

./start.sh

Builds the image locally with platform detection and a health check. Useful when you're developing against the backend.

Detailed guides

- Installation Guide: Docker, OpenMediaVault, Synology, dev setup
- macOS Guide: Docker Desktop, Apple Silicon, library paths
- Configuration: Env vars, API keys, Tailscale HTTPS, backup
- Library Browsers: Build custom 2D/3D visualizations
- REST API: Backend API reference

Listen from anywhere

The goal is a private URL you can reach from your phone on cellular — not a public site. Don't port-forward 4400 to the open internet.

Recommended: Tailscale

Install Tailscale on the server and each device you want to listen from, enable HTTPS certificates in the admin console, then run one command on the server:

tailscale serve --bg https / http://localhost:4400

Visit https://<your-server>.<tailnet>.ts.net from any signed-in device (iPhone, laptop, TV). Tailscale provisions a Let's Encrypt cert automatically and renews it for you.

Why HTTPS matters: the iOS/Android PWA install prompt, service-worker caching, and "Add to Home Screen" only work over HTTPS. Plain http://10.0.0.42:4400 does not.

Also works with

- Cloudflare Tunnel — if you want a stable public hostname routed through Cloudflare without opening a port.

- WireGuard — roll your own VPN; point your phone at the VPN and use the LAN URL.

- Reverse proxy (nginx, Caddy, Traefik) on a box with a real domain and cert.

- Any VPN you already run — anything that puts your phone on the same network as the server will do.

FAQ

Do I need an API key?

Yes for the AI chat — Familiar uses the Anthropic Claude API, and queries typically cost a fraction of a cent. Basic library browsing, playback, search, Mood Grid, Music Map, and 3D Explorer all work without an API key.

What hardware do I need?

See the Requirements callout above. A small NAS works — the author runs a 23k-track library on an OpenMediaVault box. Analysis is the heaviest workload; once a track is analyzed it doesn't need to be re-analyzed.

Does my music or listening data leave my network?

No. The opt-in community cache shares only one-way SHA-256 hashes of audio fingerprints — no track titles, artists, file paths, or listening history. You cannot reconstruct a library from the hashes. AI chat queries go to Anthropic (because that's where Claude runs); your audio files never leave your server.
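For a sense of what a one-way SHA-256 hash looks like in practice, here is a minimal sketch using Python's standard `hashlib`. How Familiar computes the audio fingerprint and exactly which bytes it hashes are not described above, so the fingerprint string and the `hash_fingerprint` helper are placeholders.

```python
import hashlib


def hash_fingerprint(fingerprint: str) -> str:
    """Return the SHA-256 hex digest of a fingerprint string.

    The digest is fixed-length and cannot be inverted to recover the
    input, which is what "one-way" means here.
    """
    return hashlib.sha256(fingerprint.encode("utf-8")).hexdigest()


# Made-up fingerprint string, for illustration only.
fingerprint = "AQADtEmUaEkSRZEGAAAA"
digest = hash_fingerprint(fingerprint)
print(len(digest), digest[:16])
```

The same fingerprint always produces the same 64-character digest, so two installations can recognise that they hold the same audio without exchanging titles, artists, or paths.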

What happens if Claude is down?

The chat panel is disabled until the API comes back. Everything else — browsing, searching, playback, similarity, smart playlists — is local and keeps working.

How long does analysis take?

Roughly 1 second per track on modern hardware, so a 20,000-track library is around 6 hours. The community cache short-circuits tracks that have been analyzed elsewhere, which can reduce a cold-start scan to minutes for popular music.
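The arithmetic behind that estimate, as a small sketch: 20,000 tracks at 1 second each is 20,000 seconds, or about 5.6 hours. The `cache_hit_rate` parameter and the 90% figure in the example are hypothetical, and the sketch assumes a cache hit costs no analysis time at all.

```python
def estimated_hours(track_count, seconds_per_track=1.0, cache_hit_rate=0.0):
    """Estimate analysis time in hours.

    cache_hit_rate is the fraction of tracks already covered by the
    community cache; this sketch assumes those tracks take zero time.
    """
    tracks_to_analyze = track_count * (1.0 - cache_hit_rate)
    return tracks_to_analyze * seconds_per_track / 3600


# Cold start with no cache hits.
print(round(estimated_hours(20_000), 1))
# Same library if 90% of tracks were already in the cache (made-up rate).
print(round(estimated_hours(20_000, cache_hit_rate=0.9), 1))
```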

Can I use the PWA on cellular / away from home?

Yes — see Listen from anywhere. Tailscale + HTTPS is the easiest path, and it's what unlocks "Add to Home Screen" on iOS and Android.

Is there an iOS app?

A Capacitor-based native build exists in packages/ios/ with background audio, lock-screen controls, and CarPlay scaffolding. Public TestFlight isn't open yet — for now, the PWA over Tailscale is the shipping path.

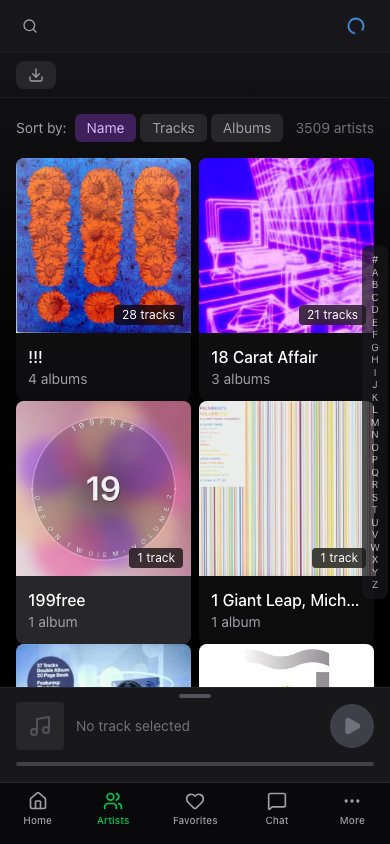

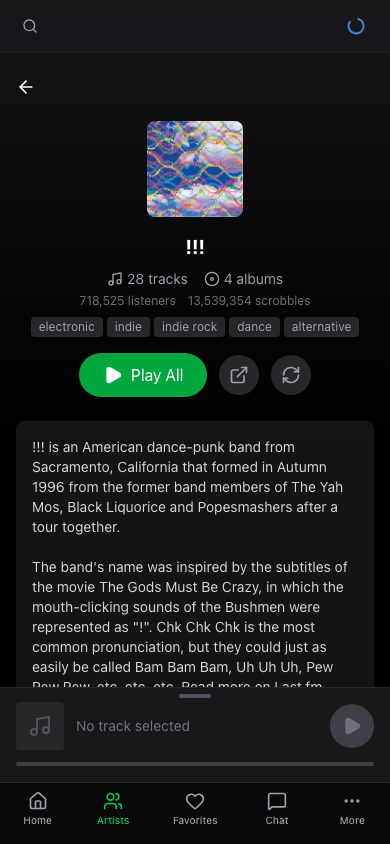

Screenshots

Mobile

Beta feedback

Familiar is in active development. Bug reports, feature requests, performance notes, and UI/UX suggestions are all welcome.